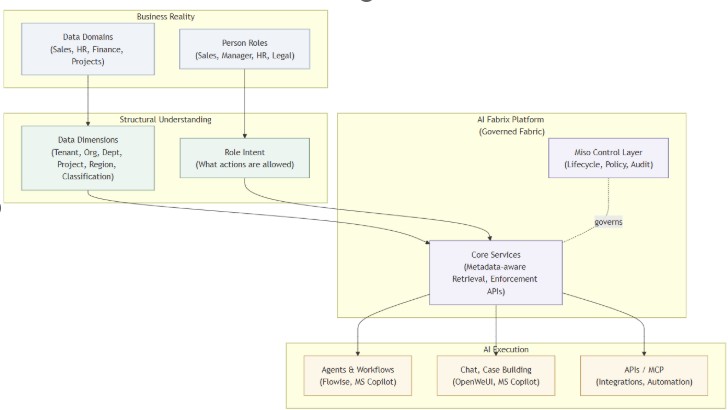

An operating model for enterprise AI.

AI Fabrix is built on a small set of structural pillars designed for governance, scale, and trust.

Most enterprise AI fails not because of models, but because AI lacks governed, contextual, per-user data. AI Fabrix solves this with CIP (Composable Integration Pipelines): an AI-native dataplane that delivers secure, permission-aware data to AI by design.

CIP is a declarative integration layer built for AI.

It replaces:

With:

Think of CIP as dbt for APIs — with enterprise security and AI context built in.

Traditional integration patterns break when AI is introduced:

CIP removes these failure modes structurally. AI never sees raw systems.

AI only receives governed, scoped, auditable data.
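To make "governed, scoped, auditable" concrete, here is a minimal Python sketch of the idea. It is not CIP's actual API, which the text above does not describe. The names `Record`, `GovernedSource`, `fetch_for`, and `allowed_fields` are hypothetical, and the ownership rule (a user sees only records they own) is a simplifying assumption.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Record:
    """A raw record as it might exist in a source system (hypothetical shape)."""
    record_id: str
    owner: str
    fields: dict


@dataclass
class GovernedSource:
    """Hypothetical layer that sits between an AI caller and raw records."""
    records: list
    allowed_fields: frozenset
    audit_log: list = field(default_factory=list)

    def fetch_for(self, user: str) -> list:
        visible = []
        for rec in self.records:
            # Governed: the caller's identity decides which records are visible.
            if rec.owner != user:
                continue
            # Scoped: only explicitly allowed fields leave the source.
            scoped = {k: v for k, v in rec.fields.items() if k in self.allowed_fields}
            visible.append({"id": rec.record_id, **scoped})
        # Auditable: every request is recorded, including empty results.
        self.audit_log.append({"user": user, "returned": [r["id"] for r in visible]})
        return visible


source = GovernedSource(
    records=[
        Record("r1", "alice", {"title": "Q3 forecast", "salary": 100}),
        Record("r2", "bob", {"title": "Hiring plan", "salary": 90}),
    ],
    allowed_fields=frozenset({"title"}),
)

alice_view = source.fetch_for("alice")
print(alice_view)  # [{'id': 'r1', 'title': 'Q3 forecast'}]
```

In this sketch the AI caller never touches `records` directly. It only sees what `fetch_for` returns for a given user, and each call leaves an entry in `audit_log`.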

With CIP, enterprises can:

Traditional platforms ask:

“Who is allowed to access this system?”

AI Fabrix asks:

“What does this data represent, and who does it belong to?”

Governance is enforced because of how the platform is built, not because someone remembered to configure it.

This is how AI becomes deployable in regulated environments.

AI Fabrix runs where your data already lives.

There is no shared SaaS layer.

No external control plane.

No black box.

This is a prerequisite for enterprise trust.

AI Fabrix enforces a clear contract between humans and systems.

AI is not an add-on.

It is treated as a native actor in the platform.

Openness without control creates risk. Control without openness creates lock-in.

AI Fabrix deliberately separates the two.

Most platforms manage access.

AI Fabrix enables understanding.

That is the difference between controlling AI and being able to use it.